Ai Solution

Mustang-M2BM-MX2 [ SAMPLE ]

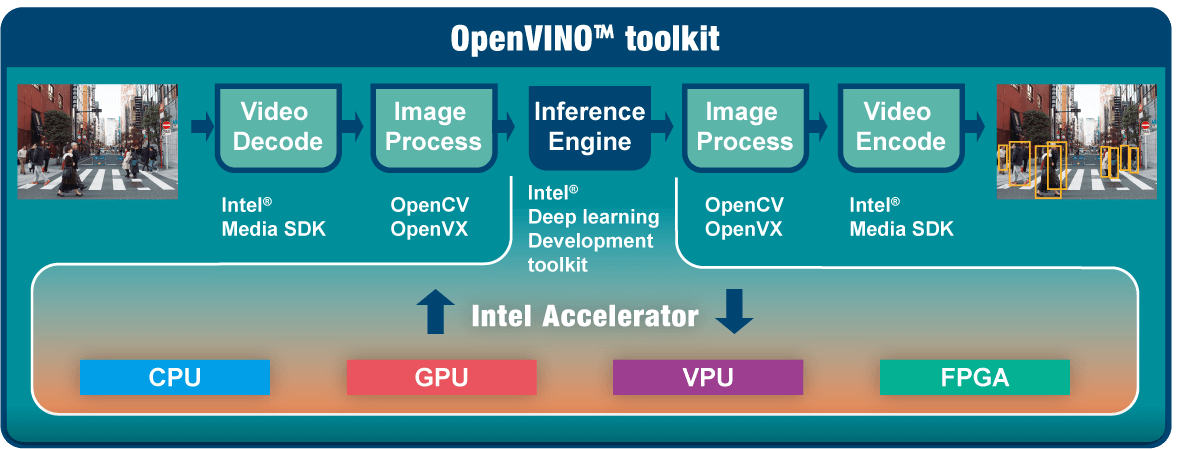

The Intel® Distribution of OpenVINO™ toolkit is built around convolutional neural networks (CNNs). It extends workloads across multiple types of Intel® platforms and maximizes performance.

It can optimize pre-trained deep learning models from frameworks such as Caffe, MXNet, ONNX, and TensorFlow. The tool suite includes more than 20 pre-trained models and supports 100+ public and custom models (including Caffe*, MXNet, TensorFlow*, ONNX*, and Kaldi*) for easier deployment across Intel® silicon products (CPU, GPU/Intel® Processor Graphics, FPGA, VPU).

•Operating Systems

Ubuntu 16.04.3 LTS 64bit, CentOS 7.4 64bit, Windows® 10 64bit

•OpenVINO™ toolkit

◦Intel® Deep Learning Deployment Toolkit

- Model Optimizer

- Inference Engine

◦Optimized computer vision libraries

◦Intel® Media SDK

•Currently supported topologies: AlexNet, GoogleNetV1/V2, MobileNet SSD, MobileNetV1/V2, MTCNN, Squeezenet1.0/1.1, Tiny Yolo V1 & V2, Yolo V2, ResNet-18/50/101

* For more information on supported topologies, please refer to the official Intel® OpenVINO™ Toolkit website.

•High flexibility: Mustang-M2BM-MX2 is developed on the OpenVINO™ toolkit structure, which allows models trained in frameworks such as Caffe, TensorFlow, MXNet, and ONNX to execute on it after conversion to an optimized IR (Intermediate Representation).
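
The conversion to IR mentioned above can be sketched as a small helper that assembles a Model Optimizer command line. This is a minimal sketch under stated assumptions, not the vendor's documented procedure: the `build_mo_command` and `expected_ir_files` helpers, the `mo.py` script location, and the file names are hypothetical. `--input_model`, `--data_type`, and `--output_dir` are Model Optimizer options in toolkit releases contemporary with this card (check the release you have installed), and FP16 is used as the default because Myriad™ X VPUs run inference in FP16.

```python
from pathlib import Path
from typing import List, Tuple

# Assumption: the real path to mo.py depends on where the toolkit is installed.
DEFAULT_MO_SCRIPT = "mo.py"


def build_mo_command(model_path: str,
                     output_dir: str = "ir",
                     data_type: str = "FP16",
                     mo_script: str = DEFAULT_MO_SCRIPT) -> List[str]:
    """Assemble (but do not run) a Model Optimizer command line."""
    if data_type not in ("FP16", "FP32"):
        raise ValueError(f"unsupported data type: {data_type}")
    return [
        "python3", mo_script,
        "--input_model", model_path,   # trained Caffe/TensorFlow/MXNet/ONNX model
        "--data_type", data_type,      # FP16 for VPU targets
        "--output_dir", output_dir,    # where the IR files are written
    ]


def expected_ir_files(model_path: str, output_dir: str = "ir") -> Tuple[Path, Path]:
    """Return the IR pair (.xml topology, .bin weights) we expect to be produced,
    assuming the default output name (the input model's file stem)."""
    stem = Path(model_path).stem
    out = Path(output_dir)
    return out / f"{stem}.xml", out / f"{stem}.bin"


if __name__ == "__main__":
    # Hypothetical model file name, for illustration only.
    print(" ".join(build_mo_command("squeezenet1.1.caffemodel")))
```

The helper only builds the argument list; passing it to `subprocess.run` on a host with the toolkit installed would perform the actual conversion.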

| Model Name | Mustang-M2BM-MX2 |

|---|---|

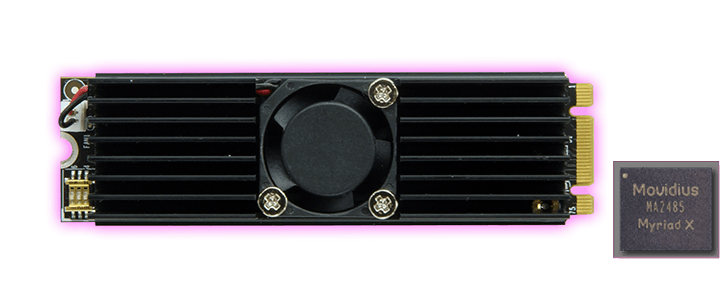

| Main Chip | 2 x Intel® Movidius™ Myriad™ X MA2485 VPU |

| Operating Systems | Ubuntu 16.04.3 LTS 64bit, CentOS 7.4 64bit, Windows® 10 64bit |

| Dataplane Interface | M.2 BM Key |

| Power Consumption | Approximate 7.5W |

| Operating Temperature | -20°C~60°C |

| Cooling | Active Heatsink |

| Dimensions | 22 x 80 mm |

| Operating Humidity | 5% ~ 90% |

| Support Topology | AlexNet, GoogleNetV1/V2, MobileNet SSD, MobileNetV1/V2, MTCNN, Squeezenet1.0/1.1, Tiny Yolo V1 & V2, Yolo V2, ResNet-18/50/101 |
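
As a quick pre-flight check, the topology row of the specification table can be expressed as a lookup. This is a minimal sketch: the `is_supported` helper and its name normalization are assumptions made for illustration, the names are expanded from the table above, and the authoritative list remains Intel's OpenVINO™ documentation.

```python
import re

# Topologies expanded from the specification table above.
SUPPORTED_TOPOLOGIES = [
    "AlexNet", "GoogleNetV1", "GoogleNetV2", "MobileNet SSD",
    "MobileNetV1", "MobileNetV2", "MTCNN",
    "Squeezenet1.0", "Squeezenet1.1",
    "Tiny Yolo V1", "Tiny Yolo V2", "Yolo V2",
    "ResNet-18", "ResNet-50", "ResNet-101",
]


def _normalize(name: str) -> str:
    """Lower-case and drop spaces, hyphens, and underscores (dots are kept)."""
    return re.sub(r"[^a-z0-9.]", "", name.lower())


_NORMALIZED = {_normalize(name) for name in SUPPORTED_TOPOLOGIES}


def is_supported(name: str) -> bool:
    """Return True if the name matches a listed topology after normalization."""
    return _normalize(name) in _NORMALIZED
```

For example, `is_supported("resnet50")` and `is_supported("tiny-yolo-v2")` both match entries in the list, while `is_supported("Yolo V3")` does not.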

| Part No. | Description |
|---|---|
| Mustang-M2BM-MX2-R10 | Deep learning inference accelerating M.2 BM key card with 2 x Intel® Movidius™ Myriad™ X MA2485 VPU, M.2 interface 22mm x 80mm, RoHS |